METHODOLOGY

SELECTION OF GEOGRAPHIC CONTENT

During content selection, the author placed high importance on current geographical events and specifically highlighted the role of the Copernicus program in addressing climate change. With guidance from the supervisor, the author decided to build the COPERNICUS MUSEUM environment, which aims to educate visitors and raise awareness of sea level rise and of the significance of the Sentinel-6 mission. To this end, the museum's geographic content was carefully chosen and includes the European Space Research and Technology Centre (ESTEC), the Falcon 9 rocket, the Sentinel-6 satellite, the Ocean, the Globe, and the city of Venice. Each of these geographic exhibits is accompanied by corresponding video material. These exhibits were chosen as the Areas of Interest (AOIs) in the COPERNICUS MUSEUM environment.

HARDWARE

A Varjo XR-3 eye-tracking headset and HTC Vive controllers were used to present the COPERNICUS MUSEUM environment to participants and to let them interact with it.

The Varjo XR-3 is designed to remain comfortable for users of all head sizes and shapes, even during extended immersive sessions, thanks to its 3-point precision-fit headband. According to the manufacturer, features such as automatic IPD (interpupillary distance) adjustment, active cooling, a smooth 90 Hz frame rate, and non-Fresnel lenses offering a wide field of view and high clarity allow users to work without discomfort or motion sickness. The XR-3 is also compatible with a wide range of Windows 10 and 11 computers. The COPERNICUS MUSEUM environment was run on a desktop computer with an Intel Core i7-8700 CPU, 64 GB of RAM, an NVIDIA GeForce RTX 2080 GPU, and a 64-bit Windows 10 operating system.

HTC Vive controllers were used to let participants interact actively with the virtual environment. Each controller incorporates sensors such as motion trackers, a gyroscope, and an accelerometer, which allow its position and orientation in the virtual space to be tracked accurately. This precise tracking supports smooth and immersive interaction.
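To illustrate how readings from a gyroscope and an accelerometer can be combined into a stable orientation estimate, the following is a minimal complementary-filter sketch for a single tilt (pitch) angle. It is a generic textbook technique, not the HTC Vive's actual tracking pipeline, which also relies on optical tracking from SteamVR base stations; the function name, the axis convention, and the blending factor `alpha` are assumptions chosen for illustration.

```python
import math

def complementary_filter(gyro_rates, accels, dt, alpha=0.98):
    """Estimate a pitch angle (radians) over time (illustrative sketch).

    gyro_rates: angular rate about the pitch axis for each sample (rad/s).
    accels: (ax, ay, az) accelerometer readings for each sample (m/s^2).
    dt: time between samples (s). alpha: weight given to the gyroscope path.
    """
    pitch = 0.0
    estimates = []
    for rate, (ax, ay, az) in zip(gyro_rates, accels):
        # Tilt implied by the direction of gravity: noisy but does not drift
        accel_pitch = math.atan2(-ax, math.sqrt(ay * ay + az * az))
        # Integrated gyroscope rate: smooth but accumulates drift over time
        gyro_pitch = pitch + rate * dt
        # Blend: trust the gyroscope short-term, the accelerometer long-term
        pitch = alpha * gyro_pitch + (1.0 - alpha) * accel_pitch
        estimates.append(pitch)
    return estimates
```

When the controller is held still and tilted, the gyroscope reports no rotation, so the estimate converges to the accelerometer's tilt; during quick rotations the integrated gyroscope term dominates because `alpha` is close to 1.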

SOFTWARE

The virtual geographic learning environment was developed primarily in Unreal Engine 4.27, which was used to build the COPERNICUS MUSEUM environment and to connect it to the Varjo software through Blueprints and the Varjo OpenXR plugin.

Blender 3.5 was used to create the 3D models of ESTEC and the Globe. Blender offers powerful 3D modeling capabilities that allow detailed and realistic models to be created, and textures were applied in Blender to enhance the models' visual appearance.

SketchUp Pro 2022 was used to create the 3D models of the Falcon 9 rocket and the Sentinel-6 satellite. SketchUp Pro is known for its intuitive interface and ease of use, which make it suitable for creating accurate and visually appealing models. Textures were applied to these models to give them a realistic appearance.

CityEngine was used to import a 3D model of the city of Venice developed by Esri. CityEngine specializes in procedural modeling for urban planning, enabling complex cityscapes to be generated efficiently.

The Datasmith plugin in Unreal Engine 4.27 was enabled to import the models created in SketchUp Pro 2022.
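Enabling a plugin in the Unreal Editor records it in the project's `.uproject` file, a JSON document with a `Plugins` array of `Name`/`Enabled` entries. The sketch below makes the same change programmatically for the plugins named in this section. The plugin identifiers (`DatasmithImporter`, `OpenXR`, `VarjoOpenXR`) and the helper `enable_plugins` are assumptions for illustration and should be checked against the `.uplugin` files actually installed.

```python
import json

# Assumed plugin identifiers (the .uplugin file names); verify them against
# the engine and project Plugins folders before use.
REQUIRED_PLUGINS = ["DatasmithImporter", "OpenXR", "VarjoOpenXR"]

def enable_plugins(uproject_text, plugin_names):
    """Return .uproject JSON text in which every named plugin is enabled."""
    project = json.loads(uproject_text)
    plugins = project.setdefault("Plugins", [])
    existing = {entry["Name"]: entry for entry in plugins}
    for name in plugin_names:
        if name in existing:
            existing[name]["Enabled"] = True  # switch on an entry that was disabled
        else:
            plugins.append({"Name": name, "Enabled": True})
    return json.dumps(project, indent="\t")
```

Applied to a project file that lists only a disabled `OpenXR` entry, the function returns a file with all three entries enabled and the other project fields unchanged.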

The Quixel Bridge plugin was enabled to access Quixel Bridge's asset library, which provides high-quality textures and materials for enhancing the visual quality of the virtual environment.

Once the COPERNICUS MUSEUM environment was prepared, the next task was to collect eye-tracking data with the Varjo XR-3 headset. The Varjo Lab Tools software was used for masking, while the eye-tracking data from the VR environment were recorded with the Varjo Base software. Eye-tracking calibration was performed in Varjo Base while the participant wore the headset.
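The method used to attribute gaze samples to the AOIs is not specified here. One common approach, sketched below, assumes that each AOI is approximated by an axis-aligned bounding box and that the gaze origin and direction are already expressed in world coordinates: the gaze ray is intersected with each box (the slab method) and the nearest hit is kept. The `AOI` class, the box coordinates, and the function names are hypothetical; inside Unreal Engine the same result would more typically come from a Blueprint line trace against the exhibits' collision.

```python
from dataclasses import dataclass

@dataclass
class AOI:
    """Hypothetical AOI approximated by an axis-aligned bounding box."""
    name: str
    box_min: tuple  # (x, y, z) lower corner
    box_max: tuple  # (x, y, z) upper corner

def ray_hits_box(origin, direction, box_min, box_max):
    """Slab test: return the entry distance along the ray, or None if missed."""
    t_near, t_far = 0.0, float("inf")  # t_near = 0 ignores boxes behind the eye
    for o, d, lo, hi in zip(origin, direction, box_min, box_max):
        if d == 0.0:
            # Ray parallel to this pair of planes: it must already lie between them
            if o < lo or o > hi:
                return None
            continue
        t1, t2 = (lo - o) / d, (hi - o) / d
        t_near = max(t_near, min(t1, t2))
        t_far = min(t_far, max(t1, t2))
        if t_near > t_far:
            return None
    return t_near

def gazed_aoi(origin, direction, aois):
    """Return the name of the nearest AOI hit by the gaze ray, or None."""
    best_name, best_t = None, float("inf")
    for aoi in aois:
        t = ray_hits_box(origin, direction, aoi.box_min, aoi.box_max)
        if t is not None and t < best_t:
            best_name, best_t = aoi.name, t
    return best_name
```

Keeping only the nearest hit matters when exhibits overlap along the line of sight, for example when the Globe stands in front of a larger exhibit.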

The eye-tracking data, stored in a CSV file, were analyzed with Python scripts written in Microsoft Visual Studio 2022, which served as the primary analysis tool.
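As an illustration of this kind of CSV analysis, the sketch below computes total dwell time and number of visits per AOI from time-ordered gaze samples. The column names `timestamp_ms` and `aoi`, and the rule that each sample is credited with the interval until the next one, are assumptions; the columns actually exported by Varjo Base may differ.

```python
import csv
import io
from collections import defaultdict

def dwell_times(csv_text):
    """Return (dwell_ms, visits) dicts keyed by AOI name.

    Assumes columns `timestamp_ms` and `aoi` (empty when gaze is on no AOI).
    Each sample is credited with the time until the next sample, so the
    final sample only closes the preceding interval.
    """
    rows = list(csv.DictReader(io.StringIO(csv_text)))
    rows.sort(key=lambda row: float(row["timestamp_ms"]))
    dwell = defaultdict(float)
    visits = defaultdict(int)
    previous_aoi = ""
    for current, following in zip(rows, rows[1:]):
        aoi = current["aoi"].strip()
        if aoi:
            dwell[aoi] += float(following["timestamp_ms"]) - float(current["timestamp_ms"])
            # A new visit starts whenever gaze enters an AOI from elsewhere
            if aoi != previous_aoi:
                visits[aoi] += 1
        previous_aoi = aoi
    return dict(dwell), dict(visits)
```

For a recording on disk, the text can be read with `open(path, newline="").read()` and passed to `dwell_times`.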

The Statistical Package for the Social Sciences (SPSS) was used for further in-depth statistical analysis.
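Per-participant results usually need reshaping before they are imported into SPSS, because repeated-measures procedures such as GLM Repeated Measures expect one row per participant with each repeated measure (here, each AOI) in its own column. The hypothetical helper below writes that wide layout from per-participant dwell times and replaces characters that are not valid in SPSS variable names, such as spaces and hyphens, with underscores. It is a data-preparation sketch only and does not reproduce the Kim & Lee (2021) procedure.

```python
import csv
import io
import re

def to_wide_csv(per_participant, aoi_names):
    """Write one row per participant and one dwell-time column per AOI.

    per_participant maps a participant ID to a {AOI name: dwell ms} dict;
    AOIs a participant never looked at are written as 0.
    """
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    # Prefix and sanitize headers so they are valid SPSS variable names
    header = ["participant"] + ["dwell_" + re.sub(r"\W", "_", name) for name in aoi_names]
    writer.writerow(header)
    for pid in sorted(per_participant):
        dwell = per_participant[pid]
        writer.writerow([pid] + [dwell.get(name, 0) for name in aoi_names])
    return buffer.getvalue()
```

The returned text can be saved as a `.csv` file for import into SPSS, where each `dwell_` column becomes one level of the repeated-measures factor.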

WORKFLOW

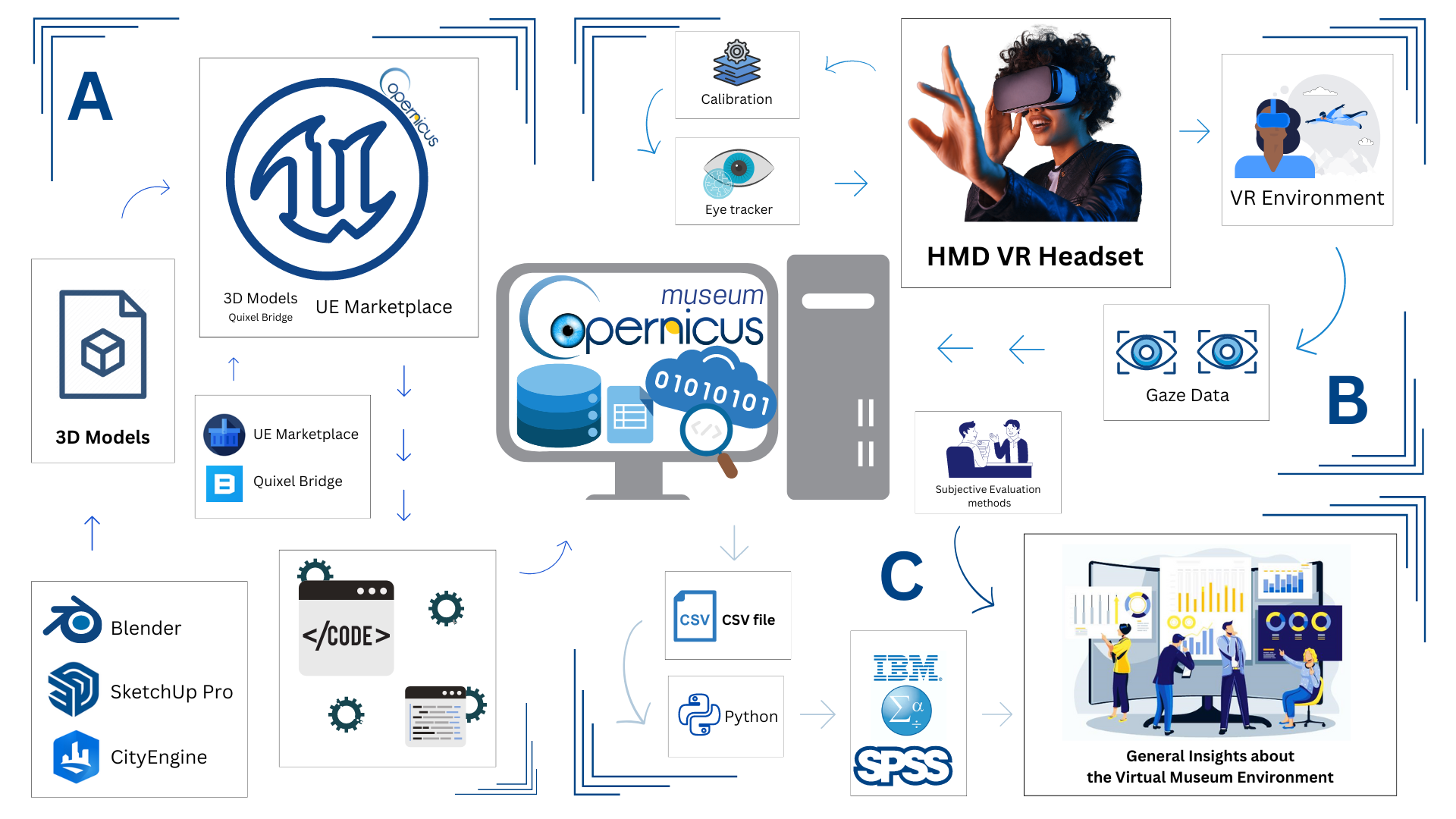

The study's workflow presented in Figure below, providing a visual representation of the various stages involved. Section A focuses on the Development of the Virtual Geographic Environment, outlining the specific steps and processes involved in creating it. Development of Virtual Reality Environments methodologies adopted from the studies of Govea & Medellín-Castillo (2015) and Lucas (2020). This environment was then connected to a VR headset using Blueprint Visual Scripting system. The Blueprint Visual Scripting system within Unreal Engine presents a comprehensive gameplay scripting approach, relying on a node-based interface within Unreal Editor to construct various gameplay components. Much like conventional scripting languages, it serves the purpose of defining object-oriented classes or objects in the engine (Unreal Engine, n.d., Introduction to Blueprints). Section B encompasses the sequential steps involved in the workflow, which begins with the calibration of the eye-tracking and extends to participants experiencing the COPERNICUS MUSEUM environment while concurrently collecting eye-tracking data. The workflow in Section B was modified differently from the gaze-based interaction scheme for VR environments proposed by Piotrowski & Nowosielski (2020). Section C details the workflow for analyzing the data obtained from the Virtual Environment. The SPSS analysis was conducted following the methodology outlined by Kim & Lee (2021). This analysis aims to uncover general insights about the environment, possibly related to user behavior, preferences, and patterns observed during the participants' virtual experiences.

Thesis Workflow